Spectre of the commons: Spectrum regulation in the communism of capital

Keywords

Abstract

The past decade has seen a growing emphasis on the social and juridical implications of peer production, commons-based property regimes and the nonrivalrous circulation of immaterial content in the online domain, leading some theorists to posit a digital communism. An acquisitive logic, however, continues to operate through intellectual property rights, in the underlying architecture that supports the circulation of content and in the logical apparatuses for the aggregation and extraction of metadata. The digital commons emerges, not as a virtual space unfettered by material exploitation, but as a highly conflictive terrain, situated at the centre of a mode of capitalism that seeks valorisation for the owners of network infrastructure, online platforms and digital content. Using a key example from core infrastructure, this paper will explore how controversies surrounding the management of the electromagnetic spectrum provide insight into the communism of capital in the digital domain. This paper proceeds in two parts: The first is historical, exploring how the history of spectrum management provides a lucid account of the expropriation of the digital commons through the dispossession and progressive deregulation of a communicative resource. The second considers current transformations to spectrum regulation, in particular the growing centrality of shared and commons spectrum to radio policy. Does a shift towards non-proprietary and unlicensed infrastructure represent an antagonistic or subversive element in the communism of capital? Or, if this communality of resources is not at odds with capitalist interests, how is it that an acquisitive logic continues to act?

Introduction

If we speak of ‘the commons’ today as a general phenomenon, this has a lot to do with the modes of production, consumption and distribution that have emerged over the past decade around information and communication technologies. Though ‘the commons’ exists in both material and immaterial spheres, and has a legacy beyond the network, recent technological transformations are identified as a core actor in the hegemony of commons-based peer-production. The facility to leverage communicative capacities, support non-hierarchical cooperation and enable the circulation of non-proprietary content has led a number of theorists to posit a ‘virtual communism’ (Lessig, 2004; Benkler, 2006; Kelly, 2009). This traces an immaterial space that trades in knowledge and culture, at once free from commercial subjugation and conversely capable of exerting influence on the material substrate of capital.

Such ‘virtual communism’ is, to echo Virno, ‘a communality of generalized intellect without material equality’ (Virno, 2004: 18). The underlying architectures that support the circulation of content are still proprietary. While user-generated content becomes increasingly central to the economy, the possibility of a ‘core commons infrastructure’, as Benkler (2001) calls it, is constrained by a variety of institutional, technical and juridical enclosures. The digital commons emerges, not as a virtual space unfettered by material exploitation, but as a highly conflictive terrain. The commons is situated at the centre of a mode of capitalism that seeks valorisation for the owners of network infrastructure, digital platforms and online content. This proprietary interest is diffuse, and increasingly so; through a series of highly confluent mechanisms, it blends the essence of ‘the commons’ with new forms of enclosure.

Today we encounter conditions in which the core tenets of communism – the socialisation of production, the abolition of wage labour, and the centrality of commons-based peer-production – are remade in the interests of capital (Virno, 2004). These conditions imply new forms of sovereignty and political economy. This is not to say that the commons has not historically potentiated capitalist accumulation, but that we are witnessing a dramatic intensification of these conditions. In turn we are faced with a number of questions: through what proprietary mechanisms and juridical processes is the digital commons enclosed? How, in turn, is surplus value extracted from the digital commons – through what technological apparatuses, property regimes and composition of capital? Finally, what political and economic possibilities might emerge alongside the hegemony of the commons?

This paper will explore how recent controversies surrounding the management of electromagnetic spectrum provide insights into the composition of contemporary capitalism. As the communications channel for all mobile and wireless transmissions, electromagnetic spectrum is a core apparatus in the digital economy; its enclosure is part and parcel of the techniques that facilitate capitalist accumulation through production over wireless and mobile networks. This discussion proceeds in two parts: First, the history of spectrum regulation provides an account of the expropriation of communicative and cooperative capacities through the dispossession, deregulation and progressive rarefaction of a common resource. As mobile data grows exponentially, however, we are witnessing changes to the ways in which this resource is managed, with many calling for a greater communality of the radio spectrum in response to perceived scarcity in mobile bandwidth. The second part of the paper explores these emergent conditions. On one hand, it appears as though antagonisms between openness and enclosure in information capitalism prefigure a crisis in property relations that potentiates possible forms of anti-capitalist ‘exploit’ (Galloway and Thacker, 2007). On the other, it is also possible that capitalist accumulation is becoming ever more tightly organised through highly fluid and distributed mechanisms that route, not only around a direct intervention in production, but increasingly around the old property regimes.

The aims of such a study are reflexive. If the burgeoning political vocabulary of the ‘communism of capital’ offers a critical insight into the enclosure of the digital commons, spectrum management also provides an empirical case to reflect on the theoretical underpinnings of this vocabulary. For example, much of this theory not only acknowledges correspondence between forms of the commons with capitalist accumulation, it also identifies a number of contradictions in such an alliance, whether through the socialisation of production or through the imminent crisis of an underlying proprietary logic. This paper explores how the production of artificial scarcity around electromagnetic spectrum, when situated against the growing demand for a greater fluidity of network resources, provides a lens for what are perceived to be the irreconcilable elements of the communism of capital. Does a shift towards non-proprietary and unlicensed infrastructure represent an antagonistic or subversive element in the communism of capital? Or, if this communality of resources is not at odds with capitalist interests, how is it that an acquisitive logic continues to act?

The communism of capital

Today we are witnessing the reconfiguration of pre-capitalist forms of social coordination in the computational-informational space. This includes a range of nonmarket and non-proprietary activities such as open source software and open standards, peer-to-peer economies, and distributed forms of production over networks. As the informational network migrates from a traditional desktop model, becoming invested in everyday spaces through mobile and pervasive platforms, such activities are thought to be capable of inflecting not only social and juridical processes, but material economies (Rheingold, 2002; Kluitenberg, 2007). This ideology of the digital commons has many advocates in both the communities of digital activism and the core apparatuses of neoliberal power.

Traditional economic theories and the new schemes proposed by the advocates of the digital commons provide only a partial understanding of this burgeoning economy. Proceeding from a dialectical perspective, the range of cooperative activities taking place over digital networks appears to transcend the traditional enclosures of capital, operating over gift economies and forms of social capital. At the same time, recent conditions point to a conflictive terrain in which these very activities emerge at the centre of the valorisation process. Such conflicts include the growing centrality of open source to the corporate value chain and the new streams of revenue based around user-generated content. Specular to these activities are the new enclosures applied over communications ‘infrastructure’ such as bandwidth, consumer devices and network architectures. This is not to say that value is not communally held and produced, but that the apparatuses that leverage its extraction are not held in common. The combination of these two circumstances is significant, transforming the qualities of both. On one hand, the commons moves from a pre-capitalist legacy towards the centre of the market, and on the other, the value of property becomes less a question of a rent over infrastructure alone, and more one of leveraging a title to extract value from commons-based peer-production (O’Dwyer and Doyle, 2012). The traditional dichotomies of socialism vs. capitalism or property vs. the commons would not seem adequate to sketch such a system.

Recent critical activity is about learning a new political vocabulary to attend to these conditions. Post-Operaismo theorists have sketched an outline of the fundamental transformations underlying Post-Fordist capitalism (Virno, 2004; Marazzi, 2007; Hardt and Negri, 2009; Hardt, 2010; Vercellone, 2010). These include changes to the conditions and products of capitalist accumulation, structural alterations to the property relations under which labour produces and changes to the technical composition of labour (Hardt, 2010). A full rehearsal of these is beyond the scope of this paper, but as they relate to the digital commons they include:

- A shift from the hegemony of material goods to immaterial goods such as knowledge, cultural capital and social/affective relations. Though material goods like cars and houses continue to play a significant role in the economy, these are supplemented by a range of commodities previously cast as external to the market, and typically held and produced in common.

- Transformations from productive capital and strict property regimes typical of the industrial era towards the parasitic extraction of rent over common outputs.

- Consequently, new models of labour have also come to the fore. In the context of the network economy, waged labour and capitalist intervention in production are replaced by ‘precarity’ and a variety of automated apparatuses for the extraction of surplus (Virno, 2004).

It should be clear that the key to understanding economic production today lies with the commons. Capitalism needs the commons and consequently a range of systems to regulate and enclose its products. Where once these enclosures operated over land, today they operate over the entirety of human knowledge. We witness this where neoliberal enterprise converges on the natural resources and productive capacities of societies. The extraction of tertiary outputs, the rent extracted by real estate from local cultural injections and the enclosure of local knowledge under intellectual property regimes are key instances of this process.

Hardt and Negri (2009) outline two different types of commons: firstly, the natural, describing material and finite resources such as common land, agricultural and mineral resources and, secondly, the cultural or ‘artificial’ commons, describing intangible products such as common knowledge, language and shared culture. While this second commons still operates through very material channels, its outputs may not be subject to the same logics of scarcity as a natural resource. In turn the range of different forms of the commons are also subject to different forms of enclosure and systems of accumulation. In an information economy, it is readily accepted that a degree of freedom is essential to productivity, where access to common knowledge, codes and standards is essential for innovation and economic growth. Privatisation through intellectual property or other forms of enclosure destroys the productive potential of the commons. In the communism of capital, therefore, and particularly in the digital commons, we increasingly encounter a condition that inverts the standard narrative of economic freedom, where openness as opposed to private control is the locus of accumulation (Von Hippel, 2005). Examples of this include the commercial development of Android, an ‘open’ and ‘free’ mobile platform, by the Open Handset Alliance, or the role of open source systems such as Linux for IT corporations like IBM.

All that said, an economy centred on the reproduction and distribution of digital commodities must still account for their translation into exchange value, which occurs outside of the commons (Pasquinelli, 2008). The digital commons stands against private control exerted by property, legal structures and market forces, and yet these economic barriers prevail in the substrate of the system, regulated by a temporary monopoly of exploitation conferred by licenses, patents, trademarks and copyright, capturing value before the true potential of the commons can be realised.

The digital commons is traditionally framed in a tiered structure that echoes the models commonly employed by network architecture[1]. Different layers of contingent logical and physical strata form an assemblage concerned with the interoperation of terminal devices and the circulation of content through communication channels. This network comprises the content itself and the layers of software-defined protocols that proceed from the user down to the physical resources underpinning the network: storage and processing technologies, terminal devices, transmitters, routers, spectrum, real estate, manpower and energy. Together these form the substrate architecture over which the digital commons is produced. New streams of value are increasingly identified within this space, from the transmission channels that form part of the telecommunications value chain, through to the attention economy that underscores monopolies such as Google and Facebook. Rights governing access to communications are at the heart of this economy, as the core infrastructure that underscores digital labour. Any reforms, therefore, need to look to the architectures that flank the digital commons, to the policies, property regimes, protocols and technological standards that structure this conflictive space[2]. This paper explores the property regimes surrounding the underlying architecture of mobile and wireless networks – electromagnetic spectrum.

Electromagnetic spectrum: An overview

The political economy of mobile media involves a network of devices and core, backhaul and radio access infrastructure. As the communications channel for all ‘radio’ transmissions, the electromagnetic spectrum is a core component in this system. The enclosure of spectrum within exclusive usage rights, property regimes and market dynamics, therefore, forms part of the technological composition of cognitive capitalism[3] (Moulier-Boutang, 2012).

But what exactly is spectrum? Albert Einstein, when asked to explain radio, is reported to have replied:

You see, wire telegraph is a kind of very, very long cat. You pull his tail in New York and his head is meowing in Los Angeles. Do you understand this? And radio operates exactly the same way: you send signals here, they receive them there. The only difference is there is no cat. (Einstein, cited in Werbach, 2004: 14)

Figure 1: Spectrum usage.[4]

In any wireless communications system there is a variety of radio devices, transmitters and receivers, and the electromagnetic waves that pass between them. Radio technologies involve the transmission of signals encoded in these electromagnetic waves in the same way a fixed network involves the transmission of messages through copper or fibre-optic cables. The term ‘radio spectrum’ refers to electromagnetic waves that traverse space at frequencies between 3,000 and 400 billion cycles per second (3 kHz to 400 GHz)[5]. These waves provide the necessary channel through which messages propagate. All wireless communications, from radio and television transmissions and wireless networks through to cellular technologies, personal networking devices and domestic radio appliances, rely on electromagnetic radiation within this frequency range for the circulation of data. The propagation characteristics of radio waves – specifically how they traverse space and interact with physical objects – make some frequencies more desirable conduits than others. The frequencies most suitable for commercial applications are typically those between 300 MHz and 3,000 MHz (3 GHz), in which television broadcasting, cellular services such as GSM and 3G, Wi-Fi and Bluetooth operate. These frequencies are attractive because antenna size is reasonable and the radio waves are of a dimension that is less susceptible to corruption by high-rise infrastructure or mountainous terrain.
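The link between frequency and antenna size can be made concrete with a short worked example. It uses the standard free-space relation between wavelength and frequency (wavelength = speed of light / frequency) and, as a simplifying assumption, treats an idealised quarter-wave antenna as a rough proxy for antenna size; it illustrates orders of magnitude and makes no claim about any particular device.

```python
SPEED_OF_LIGHT = 299_792_458  # metres per second (exact SI value)


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength in metres: wavelength = c / f."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return SPEED_OF_LIGHT / frequency_hz


def quarter_wave_antenna_m(frequency_hz: float) -> float:
    """Idealised quarter-wave antenna length, used here only as a rough size proxy."""
    return wavelength_m(frequency_hz) / 4


# Frequencies taken from the ranges discussed in the text.
examples = {
    "3 kHz (lower edge of the radio range)": 3e3,
    "300 MHz": 300e6,
    "2.4 GHz (Wi-Fi, Bluetooth)": 2.4e9,
    "3 GHz": 3e9,
}

for label, f in examples.items():
    print(f"{label}: wavelength {wavelength_m(f):.3f} m, "
          f"quarter-wave antenna {quarter_wave_antenna_m(f):.3f} m")
```

At 3 kHz the wavelength is roughly 100 km, while between 300 MHz and 3 GHz it falls from about 1 m to about 10 cm, which is why antennas in this range fit on rooftops and in handsets.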

Spectrum is ‘spectral’. Its incorporeal and invisible qualities relegate it to something resembling the fluid medium of the Victorian ether – an amorphous substance through which messages mysteriously propagate. However, the fact that radio waves have a physical dimension that interacts with surrounding matter and, furthermore, that these waves play a central role in the information economy, makes spectrum material, both as network infrastructure and as a resource with an accelerating market value. In this way, spectrum echoes many of the properties of informational products in its seeming intangibility and lack of physical degradation, while at the same time belonging to the material world of radio devices that are rivalrous and subject to constraints regarding how they interact and negotiate interference.

This conceptual ambiguity, as we will come to see, has made governance of the electromagnetic spectrum a difficult issue, where regulatory debates surrounding the accurate modelling of use, occupancy, interference or scarcity often appeal to conceptual metaphors to perform political work. At the same time this material/immaterial ambiguity also makes electromagnetic spectrum and the legacy of its management an ideal lens for the digital commons. Rather than positing an immaterial realm of production that is fundamentally separate from the material economy, spectrum controversies go a long way to demonstrating the confluence of immaterial and material forces and relations of production in the digital domain. This is to say not only that communication proceeds along material and energetic channels, but that these networks involve highly confluent arrangements of contradictory strata, at one level freely reproducible and held in common and at another finite, rarefied and consolidated in property. Recent debates around spectrum management, therefore, problematise many of the normative assumptions about the digital commons and highlight many of the conflicts between the informational flows of a digital economy and its machinic underbelly, which is to say between cognitive and industrial forms of capitalism.

Spectrum’s economic value is based on the right to build wireless communications infrastructure and the possibility to leverage networks, services and commodities upon that infrastructure (Forge et al., 2012). At the heart of this value are the communicative, cognitive and cooperative capacities of a network of users (Manzerolle, 2010). Exclusive control over and access to these capacities is central to the accumulation strategies of cognitive capitalism; it plays an integral role in the expropriation of surplus from the digital commons. As computation increasingly migrates to mobile and pervasive environments reliant on spectrum-based technologies, this is ever more the case.

The role of spectrum has expanded over the past decade. In the twentieth century, non-federal spectrum was central to broadcast media such as public radio and television. Political economist of communications Dallas Smythe (2001) argued that control of these electromagnetic channels was a locus for value accrued through an attention economy over media audiences. Referred to as the ‘audience commodity’, it was the main commodity produced by any media form that earned its primary revenue from advertisers. Today this relation is intensified in keeping with Christian Fuchs’s extension of Smythe’s theory towards the ‘prosumer commodity’ (2010), referring to surplus produced through the consumption, production and distribution of cultural capital over multicast networks such as the Internet. This does not signify a democratisation of media, but the total commodification of human creativity. In turn we can trace a correspondent intensification of the technical assemblages that facilitate this extraction. Contemporary spectrum-orientated networks pervade spaces and biologies, not just through the recent influx of smartphones and tablets, but through ambient sensor networks, meshes, smart grids and even microscopic sensing systems[6], all of which rely on electromagnetic waves for transmission. As a result, control of the electromagnetic spectrum today facilitates the extraction of value across the whole range of human subjectivity, expanding and networking previously diverse forms of social production. Through mobile media we encounter not only the progressive fluidity of labour and social space, but the dynamic extraction of everyday demographic, psychographic, relational, locative and even biometric data from mobile consumers[7]. Such intense activities are reliant on a range of next-generation high-speed architectures for mobile broadband such as 3G, 4G, LTE and LTE Advanced. This translates into an exponential demand for mobile bandwidth, reflected both in the astronomical prices currently paid by incumbents for frequency assignments[8] and in predictions of a global spectrum deficit as early as 2013 (Higginbotham, 2010).

The history of the radio spectrum is emblematic of a process through which common communicative capacities were progressively enclosed within various property regimes. Since the first radio acts, spectrum has been consolidated in a command-and-control framework under the guardianship of national regulatory authorities. Regulatory frameworks are broadly dictated by the International Telecommunication Union (ITU), a UN agency that intercedes with the national regulatory authorities of various territories to define a global standard of allocation. Where ‘allocation’ refers to the partitioning of bands of frequencies for specific applications such as radio, television or cellular networks, ‘assignment’ refers to the attribution of licenses to service providers within each allocated frequency band, a task for which each national regulator is responsible. These assignments are determined through comparative hearings or competitive auctions. Licenses confer on an incumbent exclusive usage of a band of frequencies in a given geographic territory. This provides the holder of the license with the right to build mobile and wireless infrastructure and/or to implement wireless transmissions for services such as television and radio, cellular communications and the mobile internet. Due to the technical and juridical consolidation of these licenses (which will be discussed in more detail shortly), rights to spectrum are concentrated among powerful incumbents such as mobile network operators, Internet service providers and public service broadcasters, who can afford to invest in expensive, long-term and large-scale infrastructures.
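The two-step structure of allocation and assignment, and the exclusivity that licensing confers within a territory, can be sketched as a small data model. This is a simplified illustration under stated assumptions: the band edges, operator names and territories are hypothetical, and real licences carry further conditions (transmit power, technical specifications, designated service, duration) that are omitted here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Allocation:
    """A band set aside for one class of service (the 'allocation' step)."""
    low_mhz: float
    high_mhz: float
    service: str


@dataclass(frozen=True)
class Licence:
    """An exclusive licence within an allocated band (the 'assignment' step)."""
    licensee: str
    low_mhz: float
    high_mhz: float
    territory: str


def assign(allocation, licences, licensee, low_mhz, high_mhz, territory):
    """Return a new list of licences with one more exclusive assignment.

    Refuses blocks outside the allocated band, and blocks that overlap an
    existing licence in the same territory (exclusivity is per territory).
    """
    if not (allocation.low_mhz <= low_mhz < high_mhz <= allocation.high_mhz):
        raise ValueError("block lies outside the allocated band")
    for lic in licences:
        overlaps = low_mhz < lic.high_mhz and lic.low_mhz < high_mhz
        if lic.territory == territory and overlaps:
            raise ValueError(f"block overlaps a licence held by {lic.licensee}")
    return licences + [Licence(licensee, low_mhz, high_mhz, territory)]


# Hypothetical band, operators and territories, for illustration only.
mobile_band = Allocation(800.0, 900.0, "mobile")
licences = assign(mobile_band, [], "Operator A", 800.0, 820.0, "Territory X")
licences = assign(mobile_band, licences, "Operator B", 810.0, 830.0, "Territory Y")
try:
    assign(mobile_band, licences, "Operator C", 815.0, 825.0, "Territory X")
except ValueError as err:
    print("refused:", err)
```

The same frequencies can be licensed to different holders in different territories, but within one territory the first licensee excludes every later claimant: the model encodes exclusivity on a frequency index, which is the arrangement the text goes on to examine.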

While the majority of spectrum is consolidated in exclusive usage, a small range of frequencies, such as the 2.4 GHz band, has remained unlicensed for common use. This means that anybody can build and transmit in these frequencies, provided they adhere to certain regulations. This unlicensed spectrum has given rise to hugely successful protocols such as Wi-Fi, Bluetooth and Zigbee, but it is also subject to regulatory constraints that restrict the scale of nonmarket and non-proprietary networks. Not only does unlicensed spectrum comprise a very small frequency band, it is also governed by transmit-power rules that constrain wave propagation to within a very limited geographic radius. Any infrastructure that intends to scale and provide coverage over a wide area or to a large community requires access to spectrum that is licensed and auctioned on a scale that suits powerful commercial entities. Ownership and control of spectrum, therefore, confers economic power on incumbents, and in turn not having possession of or rights to this resource is a major constraint on the development of a common communications infrastructure[9].

Despite the prevailing belief that the radio waves constitute a ‘public good’ held in trust by national regulatory authorities such as the FCC or Ofcom, the reality is that this supposedly public resource is consolidated in ways that favour the media and communications industry. These powerful incumbents treat licences more or less like property, the market value of which is clearly reflected when such corporations are valued. Despite spectrum’s status as a public good, licenses are arguably circulated without direct benefit to the public. Instead, this revenue is extracted through rent by powerful corporations and institutions that have succeeded in privatising the commons.

The becoming-rent of profit

The communism of capital is characterised by a return and proliferation of forms of rent (Vercellone, 2010). Rent is the revenue that can be extracted from exclusive ownership of a resource, where value is contingent on its availability with respect to demand (Harvey, 2001). Industrial capitalism concerned direct intervention in the production process, and subsequently in the generation of profit. In industrial capitalism, therefore, rent is characterised as external to production and distinct from profit. Industrial capitalism constituted a shifting emphasis from immobile to movable property, corresponding to a shift from primitive accumulation towards profit. Rent was largely understood as a pre-capitalist legacy, traditionally associated with immobile forms of property such as land. Where ‘rent’ is the primary locus of value, the rentier is thought to be external to the production of value, merely extracting the economic rent produced by other means. The generation of profit, in contrast, requires the direct intervention of the capitalist in the production and circulation of material commodities. It is associated with the ability to generate and extract surplus (Vercellone, 2008, 2010). This transformation from rent to profit, many theorists argue, is emblematic of a passage from primitive accumulation to capitalist productive power in industrial capitalism (Hardt, 2010). In contrast, capitalist accumulation is today characterised by a shift from the productive forms of capitalism that characterised the industrial era towards new modalities in which rent is no longer cast in opposition to profit. Through the growing role of property in extracting value from a position external to production, and the manipulation of the social and political environment in which economic activities occur, such as the management of scarcity and the increasingly speculative nature of capital itself, the core tenets of ‘rent’ are confused with ‘profit’. This is described in the Post-Operaismo theory of the ‘becoming-rent of profit’, an economic theory specular to the communism of capital.

Rent, as Pasquinelli (2008) maintains, is the flipside of the commons. Through the rent applied over proprietary frameworks that flank the digital commons, the material surplus of immaterial labour is opened to extraction. Spectrum, in this case, like a monopoly over knowledge, decision engines, storage or processing capacities, provides the owner of that informational resource with the opportunity to leverage this property in order to extract value from a position external to its production. Where wireless transmissions are concerned, underpinning this process is the reification and subsequent rarefaction of radio signals – the commodification of electromagnetic transmissions followed by progressive arguments for the necessity of institutional regulation, first through state bodies, and later, increasingly through enterprise.

The becoming-rent of profit: Enclosure

Enclosure of the digital commons operates through the dual processes of dispossession and deregulation of these architectures (Dyer-Witheford, 1999; Hardt and Negri, 2009). To secure cooperation, capital must first appropriate the communicative capacities of the labour force. Common tools are appropriated and filtered through administrative channels, at which point they are once again distributed as part of the services capital must deliver to the labour force in order to ensure its ongoing development. But how does enclosure operate over something as intangible as electromagnetic spectrum? Throughout the history of radio communications, a variety of apparatuses that perform this enclosure can be identified, at turns semantic, technical and juridical.

In a wireless communications network there are radio devices and electromagnetic waves that pass signals between them. In information theory, this inter-device relationship is referred to as a ‘channel’. It is contingent; it does not exist independently of these technological interactions. In other words, radio signals do not traverse an immaterial medium redolent of the Victorian ether or ‘the spectrum’. They are the medium (Werbach, 2004). Nonetheless, spectrum is almost universally treated as a spatial rather than a relational artefact, where frequency is equivalent to geographic territory, signals are phenomena that traverse this space and radios are agents operating in this territory. The reality, according to de Vries, is harder to envisage: spectrum is closer to a distribution of related entities that range over a set of values, such as, in its current management, radio energy indexed by frequency (de Vries, 2008).

Are we not simply dealing with space in a fourth dimension? Having reduced space to private ownership in three dimensions should we not also leave the wavelengths open to private exploitation, vesting title to the waves according to priority of discovery and occupation? (Childs, 1924)

Spectrum as ‘land’ is a conceptual metaphor that over time comes to operate as an empirical truth. We speak of spectrum as ‘occupied’ or ‘fallow’, of licensed spectrum as ‘private property’ and unlicensed as ‘the commons’. Conceptual metaphors are useful to make abstract concepts intellectually concrete (Lakoff and Johnson, 2003), but there is more at play than a necessary disambiguation; they normalise certain relations, crystallise habits of thought and discourage others. In this case, ‘the spectrum’ as a geographic trope performs an integral function in the enclosure of the commons. At the heart of this commodity is a social relation (Lukács, 1967). It draws the fluid relations between agents into a material domain where, to echo Lukács, ‘they acquire a new objectivity, a new substantiality which they did not possess in an age of episodic exchange’ (1971: 92)[10].

Another metaphor that structures the radio space is that of ‘interference’. If electromagnetic spectrum is a territory, interference describes an ‘inevitable’ tragedy-of-the-commons scenario (Hardin, 1968), whereby the confluence of competing signals within that territory results in an intolerable signal-to-noise ratio. It is generally equated with the overpopulation of that space by transmitting devices and in turn serves as the primary rationale both for the enclosure of frequencies within property rights and against proposals for a spectrum commons. It is more accurate to say that interference is the effect of unwanted energy on a radio receiver, degrading its performance or causing information loss from an intended signal. In other words, we are not speaking about a fundamental competition of the waves themselves but about the inability of a radio receiver to extract meaningful information. Interference, therefore, is highly contingent and cannot be defined outside the specifications of a technical system. It is particular material or juridical arrangements, rather than simply the overpopulation of the airwaves, that produce this condition[11].
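The point that interference is a property of the receiver rather than of the airwaves can be illustrated with the standard engineering measure of signal-to-interference-plus-noise ratio (SINR). The sketch below is a simplified illustration under stated assumptions: the power levels are hypothetical, `rejection_db` is a hypothetical stand-in for receiver selectivity, and the 10 dB decoding threshold is likewise assumed. The same signals arrive at two receivers, and only the receiver's design decides whether ‘interference’ occurs.

```python
import math


def dbm_to_mw(dbm):
    """Convert a power level in dBm to milliwatts."""
    return 10 ** (dbm / 10)


def sinr_db(signal_dbm, interference_dbm, noise_dbm, rejection_db=0.0):
    """Signal-to-interference-plus-noise ratio at a receiver, in dB.

    rejection_db is a hypothetical stand-in for how much of the unwanted
    energy the receiver's filtering suppresses before decoding.
    """
    signal = dbm_to_mw(signal_dbm)
    interference = dbm_to_mw(interference_dbm - rejection_db)
    noise = dbm_to_mw(noise_dbm)
    return 10 * math.log10(signal / (interference + noise))


REQUIRED_SINR_DB = 10.0  # hypothetical threshold for successful decoding

# The same signals arrive at both receivers; only receiver design differs.
for name, rejection in [("simple receiver", 0.0), ("selective receiver", 20.0)]:
    sinr = sinr_db(-70.0, -75.0, -100.0, rejection_db=rejection)
    outcome = "decodes" if sinr >= REQUIRED_SINR_DB else "suffers interference"
    print(f"{name}: SINR {sinr:.1f} dB -> {outcome}")
```

With these assumed figures the simple receiver sees an SINR of about 5 dB and fails, while the selective receiver sees about 24 dB and decodes, although nothing about the transmitted energy has changed.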

Over time, conceptual and material arrangements solidify and reinforce each other in the regulation of spectrum. In the US Radio Act of 1927, for example, the airwaves were declared ‘public property’ and put under the guardianship of the Federal Radio Commission, later to become the Federal Communications Commission (FCC), which was given the authority to issue temporary licenses to those judged to broadcast ‘in the service of public interest, convenience and necessity’ (Marcus, 2004). To this day, licenses or channel assignments regulate the frequency at which a license holder can transmit, the signal strength of the transmissions as an index of wave propagation, the geographic territory, technical specifications and the designated service to be provided. Despite the availability of a variety of techniques for dynamic spectrum access[12] as early as the 1940s, regulation maintains exclusive forms of licensing on a frequency index. In effect, these technical and juridical specifications solidify a semantic enclosure; they produce spectrum as an excludable resource.

It is worth noting that while such licenses ceded exclusive control of a frequency block to a service provider within a given geographic region, this claim did not yet constitute a permanent property right. However, the twentieth century chronicles not only the reification and subsequent rarefaction of spectrum, but the gradual justification of private enterprise over state control of this ‘public good’. By the 1950s, key economists were making persuasive arguments for the use of market forces to distribute transmit rights (Herzel, 1951; Coase, 1959). The market-based approach proposes that, instead of being managed through a state-defined regulatory body, spectrum should be bought or sold like any other commodity, with governments issuing not only licenses but property rights that corporations could trade, combine or otherwise modify (Coase, 1959; Hazlett and Leo, 2010). Auctions would be used to assign and efficiently distribute these rights. Economic, as opposed to regulatory, decisions, these economists argued, would help to direct communications to where they delivered the highest social gains (Coase, 1959). The neoliberal argument at play claims that market-based solutions are inherently more socially valuable, internalising the digital commons within the context of privatisation in much the same way that public parks may be provisioned within the context of private real estate. Since their introduction in the 1990s, auctions have played an increasing role in spectrum policy in both the US and Europe and have achieved significant revenue through the sale of prime spectrum ‘real estate’ for next-generation mobile networks (Thomas, 2012).

The becoming-rent of profit: The production of scarcity

Beyond the enclosure of the commons, the survival of exchange value is increasingly contingent on the destruction of non-renewable scarce resources and/or the creation of an artificial scarcity where these goods are by nature non-rival and reproducible. Enclosure and scarcity go hand in hand; there is no chronology as such. The extraction of rent is dynamic and these elements, which are separated for clarity in this paper, are in reality entangled, imbricated and mutually enforcing.

According to Vercellone (2010), resources on which rentier appropriation is based today do not tend to increase with rent; indeed they do exactly the opposite. To quote Napoleoni’s (1956) definition, rent is ‘the revenue that the owner of certain goods receives as a consequence of the fact that these goods, are, or become, available in scarce quantities’ (quoted in Vercellone, 2010: 95). Rent is thus linked to the artificial scarcity of a resource, and to a logic of rarefaction, as in the case of monopolies. Rent, therefore, leverages monopolistic or oligopolistic forms of property, and positions of political power that facilitate the manufacture of scarcity. Scarcity in the digital commons is induced by a variety of juridical artefacts such as intellectual property or digital rights management in the case of digital content, and through a combination of rhetorical devices and technological or juridical regulations in the case of electromagnetic spectrum.

There has been wide-ranging controversy surrounding the scarcity of spectrum in recent years, where growing predictions of a severe deficit in available spectrum intersect with criticisms concerning the inefficient management of this resource. Spectrum, many argue, rather than being a naturally scarce resource, has been ‘managed into scarcity’ by rent-seeking activities that frame episodic restrictions as permanent barriers (Werbach, 2011; Forge et al., 2012). Theoretically, limitations to bandwidth do exist, but the previous use of an electromagnetic wave as a channel does not impact the fidelity of future transmissions. In this sense, spectrum can be defined as a perfectly renewable resource (Benkler, 2004) but just as easily framed or managed as a rival good. The current spectrum deficit can be largely attributed, not to any endemic scarcity of the radio waves themselves, but to an economic landscape that privileges exclusive usage rights over shared and unlicensed allocations[13].

In this sense, what counts as a usable channel is always dependent on the threshold constraints of available knowledge, technology and legislation (Sandvig, 2006). When wireless communications were first implemented, for example, constraints on technology and expertise meant that the possibilities for multiple transmissions and for managing competing signals were fairly limited, and early techniques favoured exclusive access. However, following Cooper’s law[14], wireless capacity is thought to have increased a trillion-fold since 1901 (Marcus, 2004). The development of a variety of non-exclusive techniques in subsequent years, from spread spectrum[15] to new forms of digital signal processing and modulation, directional antennas[16], and various forms of cognitive and software-defined radio[17], reconfigures the geography of enablement and constraint. Though frequency-specific receivers produce a rival, excludable and scarce resource, other radio techniques permit a variety of cooperative negotiations between devices transmitting in the same frequency band. These pose a significant challenge to an ideological construct that treats spectrum as a rivalrous good. While some of these techniques are already implemented in the small unlicensed domains available, such as the 2.4 GHz band, political lobbying by powerful incumbents and legacy regulations from state bodies mean they are still prohibited in licensed spectrum. Current policy continues to give precedence to a signal processing technique that supports exclusive ownership, prohibiting the exercise of techniques that contest exclusive use.
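The trillion-fold figure can be checked with simple arithmetic, assuming the 30-month doubling period associated with Cooper's law holds steadily throughout. The function names in this minimal Python sketch are illustrative only.

```python
import math

DOUBLING_PERIOD_YEARS = 30 / 12  # Cooper's law: capacity doubles every 30 months

def growth_factor(years: float) -> float:
    """Multiplicative capacity gain after `years` of steady doubling."""
    return 2 ** (years / DOUBLING_PERIOD_YEARS)

def years_to_reach(factor: float) -> float:
    """Years of steady doubling needed to multiply capacity by `factor`."""
    return math.log2(factor) * DOUBLING_PERIOD_YEARS

print(f"{growth_factor(100):.3e}")          # 100 years = 40 doublings
print(f"{years_to_reach(1e12):.1f} years")  # time needed for a trillion-fold gain
```

Forty doublings, roughly one century at this rate, give a gain of about 1.1 × 10^12. This is consistent with a trillion-fold increase over the twentieth century.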

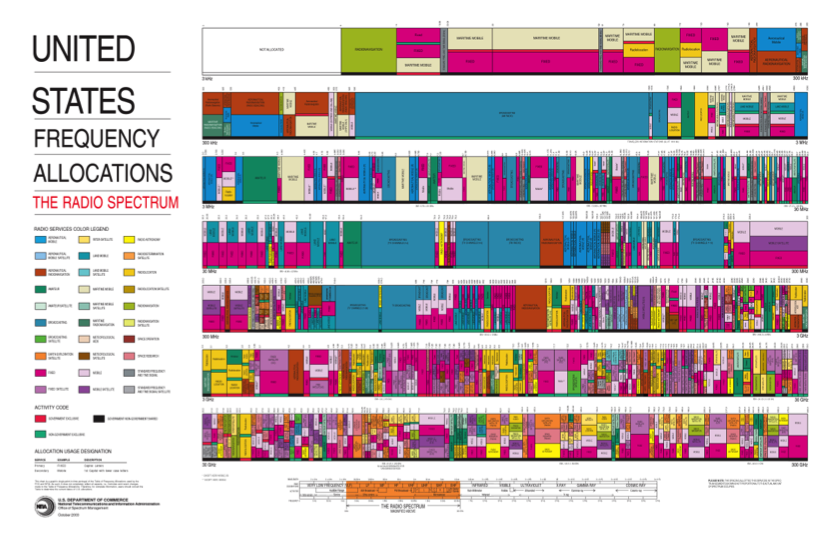

If scarcity is produced through frequency specific licensing, it is further consolidated through the scale of these assignments. Current allocation divides spectrum into large blocks which are assigned on a regional or nationwide basis. These frequency channels are in turn flanked by ‘guard bands’ – empty margins around active frequency domains designed to prevent possible interference between proximate operators. This practice not only cedes control to economically powerful actors who can afford to invest in this kind of scale, but the geographic extent of current allocation techniques, coupled with highly

Figure 2: FCC Frequency allocation chart.[18]

conservative margins of unutilised bandwidth, is apt to produce an excess capacity that is left to accrete as rent on the resource (Benkler, 2004)[19].
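Benkler's point about granularity, together with the role of guard bands, can be illustrated with a toy band plan. The Python sketch below is a hypothetical model, not any regulator's method. It assumes that a licensee's demand must be met in whole blocks of fixed size, and that each assignment is flanked by one guard band on either side. It then reports how much of the reserved spectrum carries no traffic.

```python
import math

def reserved_and_idle(demand_mhz: float, block_mhz: float, guard_mhz: float):
    """Return (reserved, idle) bandwidth in MHz for one assignment.

    Hypothetical model: demand is rounded up to whole blocks, and the
    assignment is flanked by one guard band on each side.
    """
    blocks = math.ceil(demand_mhz / block_mhz)
    assigned = blocks * block_mhz
    reserved = assigned + 2 * guard_mhz
    idle = reserved - demand_mhz
    return reserved, idle

# A user needing 7 MHz under coarse (10 MHz) and fine (1 MHz) granularity.
for block in (10, 1):
    reserved, idle = reserved_and_idle(7, block, guard_mhz=1)
    print(f"{block:>2} MHz blocks: {reserved} MHz reserved, {idle} MHz idle "
          f"({idle / reserved:.0%})")
```

Coarser blocks leave a larger share of reserved spectrum idle, even before actual usage patterns are taken into account.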

Finally, scarcity is also performed through informational databases. An examination of the static frequency allocation charts of any first-world country shows electromagnetic spectrum to be heavily occupied (FCC, 2012b; Ofcom, 2010). Such charts, however, record only allocation and assignment, not ongoing utilisation. Moreover, even in more dynamic tables such as geolocation databases, activity is often determined by highly conservative wave propagation models that report a channel as occupied, in favour of the incumbent, when in reality it often is not (Marcus, 2010)[20].
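A geolocation lookup of this kind can be sketched in Python as follows. This is a hypothetical illustration, not the model used by any actual database. It predicts the incumbent's received power with the free-space path-loss formula, FSPL(dB) = 20 log10(d_km) + 20 log10(f_MHz) + 32.44, and declares a channel occupied whenever that prediction exceeds a protection threshold. Free space is the most pessimistic case for a would-be secondary user, because it ignores terrain, buildings and other attenuation. The `extra_loss_db` parameter, given an assumed value below, shows how accounting for attenuation can flip the verdict. All transmitter powers, distances and thresholds are hypothetical.

```python
import math

def fspl_db(distance_km: float, freq_mhz: float) -> float:
    """Free-space path loss in dB (distance in km, frequency in MHz)."""
    return 20 * math.log10(distance_km) + 20 * math.log10(freq_mhz) + 32.44

def predicted_rx_dbm(eirp_dbm, distance_km, freq_mhz, extra_loss_db=0.0):
    """Incumbent power predicted at the query location."""
    return eirp_dbm - fspl_db(distance_km, freq_mhz) - extra_loss_db

def channel_available(eirp_dbm, distance_km, freq_mhz, protection_dbm,
                      extra_loss_db=0.0):
    """Database verdict: available only if predicted power is below the threshold."""
    return predicted_rx_dbm(eirp_dbm, distance_km, freq_mhz,
                            extra_loss_db) < protection_dbm

# Hypothetical query: 60 dBm incumbent, 80 km away, 600 MHz, -114 dBm threshold.
print(round(predicted_rx_dbm(60, 80, 600), 1))                 # about -66.1 dBm
print(channel_available(60, 80, 600, -114))                    # False: "occupied"
print(channel_available(60, 80, 600, -114, extra_loss_db=50))  # True, with assumed 50 dB extra loss
```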

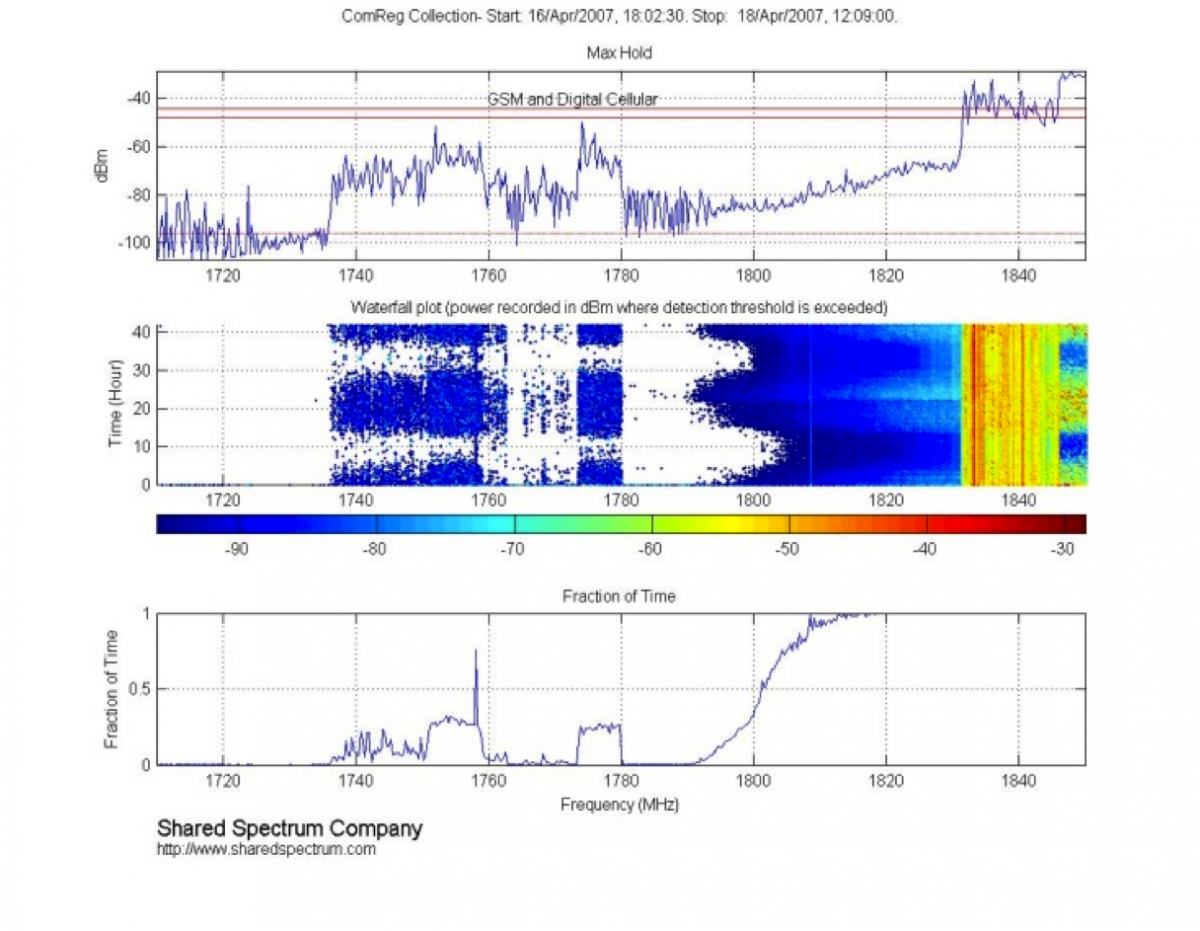

Figure 3: Shared Spectrum tests of spectrum utilisation in Dublin City Centre 16-18 April 2007.[21]

Scarcity in all of these instances results from the rarefaction of a resource and the rent-seeking architecture of a network economy that seeks returns for the owners of core infrastructure, and not, as is presumed, from the intangible constraints of the airwaves themselves. A significant number of studies comparing static tables of spectrum allocation with real-time activity return dramatically different results. Where reference to the FCC frequency allocation chart demonstrates high levels of scarcity and full utilisation, for example, current spectrum utilisation through dynamic sensing and measurement is estimated by numerous studies to be at best 17% in urban areas and 5% elsewhere (Ballon and Delaere, 2009; Forge et al., 2012).
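The gap between allocation and use arises from what is being measured. The minimal Python sketch below assumes a simple energy-detection rule: a channel counts as busy in a time slot if the measured power exceeds a threshold. The threshold, channel names and power samples are all hypothetical. They are not taken from the Dublin measurements or from the studies cited above.

```python
def occupancy(samples_dbm, threshold_dbm):
    """Fraction of time slots in which measured power exceeds the threshold."""
    busy = sum(1 for s in samples_dbm if s > threshold_dbm)
    return busy / len(samples_dbm)

# Hypothetical sweep: both channels appear as "allocated" in a static chart.
allocation_chart = {"ch_a": "allocated", "ch_b": "allocated"}
measurements = {
    "ch_a": [-60, -62, -110, -108, -109, -111, -107, -110, -109, -112],
    "ch_b": [-105, -109, -111, -108, -110, -112, -107, -109, -106, -110],
}
THRESHOLD_DBM = -95  # hypothetical energy-detection threshold

for ch, status in allocation_chart.items():
    print(ch, status, f"{occupancy(measurements[ch], THRESHOLD_DBM):.0%} measured use")
```

A static chart would show both channels as fully taken. Under this measurement rule, however, one channel is busy in a fifth of the time slots and the other is never busy.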

Figure 4: Shared Spectrum tests of spectrum utilisation in Dublin City Centre 16-18 April 2007.[21]

Contradictions in the communism of capital

There are irreconcilable elements inherent to the communism of capital. These are sometimes presented as a contradiction between the productive nature of the capitalist, as a generator of new forms of wealth, and the parasitic character of the rentier. Explored through the lens of spectrum regulation, however, this condition appears more nuanced. Hardt and Negri frame the centrality of the commons to capital as a metastable condition that will eventually exceed its boundaries and give way to the productive multitude, arguing that ‘the freedom required for biopolitical production also includes the power to construct social relationships and create autonomous social institutions’ (2009: 310). Here, the hegemony of the digital commons constitutes the provision of social tools and critical faculties required to mobilise the labour force. This perspective is echoed by advocates of free culture such as Benkler (2006), who understands the economic importance of cultural production as an emancipatory force, and Rheingold (2002), who views pervasive media as a vital tool for political mobilisation. However, without a common infrastructure including an open physical layer, an open logical layer and an open content layer, such social and intellectual activity is still open to extraction. It is therefore worth looking beyond the ways in which the centrality of the digital commons cultivates social and cooperative capacities to how the hegemony of the commons inflects the property relations that underpin the substrate of the network. It is here that we encounter various structural antagonisms in operation in the expropriation of the digital commons. This is where the circulation of immaterial products – those ‘freely reproducible’ outputs of the digital commons – reveals its material and energetic expenditure. This is reflected not only in the productive power of minds and bodies, but in the storage and processing power, electricity, cooling resources and bandwidth required to support an immaterial economy of goods and services.

We are witnessing attempts to integrate an ‘immaterial’ surplus not easily subjected to proprietary logic into a progressive growth dynamic established on the forms of enclosure that conditioned accumulation in industrial capitalism. This produces antagonisms where the necessary openness of the digital commons intersects with attempts to establish economic barriers over the infrastructure that facilitates its production. In other words, where openness and fluidity are a necessary condition of the communism of capital, the ‘old’ property rights represent a structural impasse.

The spectrum commons

In the case of spectrum, one such antagonism concerns techniques that produce scarcity and prohibit access at precisely the moment when excess capacity is needed to support a growing knowledge economy. The telecommunications industry and associated regulatory authorities for spectrum now identify an imminent ‘spectrum crunch’, in which demand exceeds the capacity of the resource in its current arrangement. Mobile data traffic is now doubling every six months (Forge et al., 2012). According to the Organisation for Economic Co-operation and Development (OECD), the number of devices connected to mobile networks worldwide is around 5 billion today and could rise to 50 billion by 2020 (PCAST, 2012). Such astonishing growth in mobile media requires the rapid expansion of networks. This creates not only a demand for bandwidth but also a need for greater fluidity of infrastructure in response to rapid fluctuations in network architecture. The previous forms of spectrum management – the command and control model of exclusive and permanent licensing – are anathema to these requirements.

The result is arguably a growing logic of diffusion that operates not only around information and cultural goods that are held and produced in common, but increasingly around those historically consolidated in industrial property regimes. Where monopoly (or oligopoly) control is an essential component of the extraction of rent (Harvey, 2001), structural contradictions at the heart of capital threaten this monopoly, causing it to break down and ceding exclusive control to transient, fluid and shared models of ownership. We can see this reflected in emergent trends in telecommunications that are antagonistic to the economic barriers necessary for the expropriation of commons resources: a growth in modalities of sharing in physical infrastructure and the circulation and redistribution of once fixed resources in response to market fluctuations (O’Dwyer and Doyle, 2012). With spectrum, this is arguably reflected not only in the emergence of market forces that trade, re-farm and otherwise reapportion licensed spectrum, but in growing arguments in favour of unlicensed spectrum coupled with dynamic spectrum access techniques (Werbach, 2003; Cochrane, 2006; Forge et al., 2012; PCAST, 2012).

A number of factors favour an unlicensed approach to spectrum regulation: the exponential demand for mobile bandwidth, the huge success of innovations in the 2.4 GHz band and the development of a variety of non-exclusive techniques that make cooperative negotiation of the electromagnetic spectrum feasible. Today, the idea of shared spectrum has a currency beyond a core group of long-time advocates of ‘open spectrum’ and commons infrastructure, emerging at the heart of neoliberal enterprise, with several high-profile reports published in 2012 recommending a paradigm shift from exclusive access to forms of shared, license-exempt and non-exclusive regulation. The final report for the European Commission, for example, entitled ‘Perspectives on the Value of Shared Spectrum Access’, provides an outline of the socioeconomic value of the spectrum commons and responds to the ‘[European] commission’s recognition of the need to move away from exclusive and persistent channel assignments…reflected in a growing emphasis on shared spectrum access, which our findings support’ (Forge et al., 2012: 12). Published in early 2012, this document influenced a subsequent report published in July by President Obama’s Council of Advisors on Science and Technology (PCAST), entitled ‘Report to the President: Realizing the Full Potential of Government-Held Spectrum to Spur Economic Growth’. The council includes Google chairman Eric Schmidt and Microsoft chief research and strategy officer Craig Mundie. The PCAST report, which proposes radical reform to the federal spectrum architecture, concludes that ‘the norm for spectrum use should be sharing, not exclusivity’ (2012: vi). Both reports represent significant policy reconfigurations and provide detailed recommendations for the implementation of a new spectrum architecture. These include greater fluidity in allocations; an increase in shared rather than exclusive channel assignments[22]; a significant increase in unlicensed spectrum[23]; and the introduction of cognitive radios and dynamic spectrum access techniques to realise these reforms[24]. These reports, while welcomed by many in the industry, have also led to accusations of creeping communism on the part of the Obama administration (Brodkin, 2012)[25].

The new commons or the new enclosures?

The implications of these recommendations, which have yet to be implemented, are difficult to unpack. Here, a crisis of the old property relations places competing economic modalities in conflict. Their outcome is uncertain. It is as yet unclear if this represents a juncture in the communism of capital – the gradual dissolution of a logic of accumulation – or simply its reorganisation through ever more distributed channels.

On one hand, communality appears to inflect all layers of the network and to undermine the forms of enclosure that were the necessary conditions for the extraction of rent. PCAST, for example, proposes a transformation of the property rights governing licensed spectrum towards an ‘exclusive right to actual use, but not an exclusive right to preclude use by other…users’ (2012: 23). This removes some of the necessary conditions of enclosure and scarcity through which rent is extracted. Where rent is the central mode of extraction from the digital commons, dynamic spectrum access and/or an increase in unlicensed spectrum directly sabotages the rent applied over wireless infrastructure[26]; it seems to destabilise the proprietary channels necessary for the expropriation of the digital commons. Long-term advocates of open spectrum argue that such transformations condition the growth and scale of community-owned networks that were previously constrained by the limitations applied to unlicensed spectrum (Forge et al., 2012). Not only an increase in unlicensed spectrum but also greater fluidity and transience in licensing are conducive to smaller-scale operations, nonmarket collectives and less economically powerful actors. These transformations might signal a decomposition of information capitalism along the lines of its inherent contradictions, as recent transformations to the technological composition of capital destabilise the economy of infrastructure.
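The difference between a right to actual use and a right to preclude use can be expressed as an access rule for a secondary device. The Python sketch below is a hypothetical illustration of the PCAST principle, not a mechanism specified in the report. Under this rule, the secondary user may occupy any licensed channel that the primary holder is not currently using. It keeps that channel while this remains true, and it stays silent when every channel is in use.

```python
from typing import Iterable, Optional, Set

def secondary_channel(channels: Iterable[int], primary_in_use: Set[int],
                      current: Optional[int] = None) -> Optional[int]:
    """Choose a channel for a secondary user under a use-based right.

    Under an exclusive right to preclude use, this would always return None.
    """
    if current is not None and current not in primary_in_use:
        return current          # primary still idle here: keep the channel
    for ch in channels:
        if ch not in primary_in_use:
            return ch           # first channel the primary is not actually using
    return None                 # every channel in actual use: stay silent

print(secondary_channel([1, 2, 3], primary_in_use={1, 3}))          # 2
print(secondary_channel([1, 2, 3], primary_in_use={2}, current=2))  # 1: vacate when the primary returns
print(secondary_channel([1, 2], primary_in_use={1, 2}))             # None
```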

On the other hand, we can also identify two possibilities for the failure of an anti-capitalist spectrum commons. One occurs where shared spectrum is tentatively introduced, but various forms of political or market-based lobbying produce unfavourable conditions for a spectrum commons. These include highly conservative restrictions that constrain market adoption of the cognitive radios required for commons spectrum such as stringent power-transmit regulations, highly politicised databases and/or the use of conservative models in spectrum sensing architectures that favour powerful incumbents.

The second possibility is more unsettling. Early innovations suggest that these new forms of accumulation produce no necessary contradistinction between ‘the commons’ and ‘the market’. As previously discussed, this alliance is already well observed at the content level, where open standards and open innovation[27] are the locus of production for software development and social media. From the PCAST and EC reports, it appears that this communality is beginning to inflect the physical layer as well. This is puzzling because, in many ways, accumulation in the communism of capital appears to have been based largely on fragile alliances between the old enclosures of industrial capitalism and the new modes of extraction in cognitive capitalism. If these alliances break down – ceding to forms of the commons not only in digital content but in the proprietary infrastructures that previously facilitated its extraction – how is it that an acquisitive logic might continue to act in the digital commons?

Caffentzis has written in detail about what he terms the Neoliberal ‘Plan B’ – the use of the tools of the commons by the Obama administration to ‘save’ Neoliberalism from itself (2010: 25). This casts the PCAST report in a different light. For Caffentzis, the appearance of seemingly collectivist, socialist and communist actions is not intended to proliferate a permanent commons, but instead to return the economy to its pre-crisis state of minimal state intervention. The danger for the network information economy is that the public associates phrases such as ‘unlicensed’ or ‘commons’ with a liberalisation and/or decommodification of the radio spectrum when, very possibly, we are encountering a much more draconian form of enclosure dressed in the socialist garb of ‘the commons’.

Along with the more optimistic reports detailing shared access to spectrum are those outlining the new forms of regulation that would be appropriate to this commons (FCC, 2012a; CSMAC, 2012). These largely focus on the exercise of distributed forms of self-regulation through a networked system. The US Commerce Department’s Spectrum Management Advisory Committee (CSMAC), for example, published recommendations for unlicensed spectrum in July 2012. These included the introduction of ‘tethered’ radio devices in all federal bands that might be opened for shared access and in all newly created unlicensed bands. Such a radio has a form of networked connectivity that allows it to negotiate spectrum access dynamically, accessing locative information about frequencies that are occupied or off-limits in particular geographic territories. However, this connectivity also allows the device to be controlled and accessed remotely. This facilitates a shift from autonomous radios to a system in which some central authority has the power to remotely monitor or even switch off a consumer device. The CSMAC report discusses the possibility of deactivating devices that are deemed to be ‘noncompliant’ through ‘connected equipment that can be required to call home periodically, and take mitigation steps when interference occurs, including the possibility of automatic shut off or losing access to particular frequencies’ (2012: 3). While this noncompliance primarily relates to ‘interference’, the report also proposes further discussion of a motion concerning intentional interruption of a wireless service by government actors for the purpose of ensuring public safety and law enforcement (2012: 7). Secondly, CSMAC outlines the possibility of leveraging the power of the network to report or inform on noncompliant devices, discussing the possibility of deputising these tethered consumer devices to report back violations by neighbouring devices. This would be supported by ‘The establishment of a voluntary clearing house website to leverage the power of crowd sourcing by creating a tool for consumers or government operators to file reports of interference to create a snapshot of where such incidents may be occurring and when’ (2012: 9). Finally, the report proposes making this connected approach hegemonic through the gradual phasing out of all unconnected devices, or by restricting these to legacy bands of spectrum (2012: 8). Though not expressly outlined, this tethered system also opens the possibility not only of new forms of surveillance, but also of the monetisation and billing of users.
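The control structure described in the CSMAC recommendations, with its periodic ‘call home’, remote revocation of frequencies and automatic shut-off, can be sketched as a small state machine. The class, the reply format and the timing parameter below are all hypothetical, because the report does not specify an interface. The sketch is only meant to show where the remote authority sits in the device's decision to transmit.

```python
from dataclasses import dataclass, field
from typing import Set

@dataclass
class TetheredRadio:
    """Hypothetical connected radio that must 'call home' to keep transmitting."""
    allowed: Set[int] = field(default_factory=set)  # channels currently permitted
    max_silence_s: float = 3600.0                   # assumed check-in deadline
    last_check_in: float = 0.0

    def check_in(self, now: float, reply: dict) -> None:
        """Apply the authority's reply (hypothetical keys: 'shut_off', 'revoked')."""
        self.last_check_in = now
        if reply.get("shut_off"):
            self.allowed.clear()                           # remote switch-off
        else:
            self.allowed -= set(reply.get("revoked", []))  # lose particular frequencies

    def may_transmit(self, now: float, channel: int) -> bool:
        """Transmit only if recently authorised and the channel is still allowed."""
        if now - self.last_check_in > self.max_silence_s:
            return False                                   # missed check-in: go silent
        return channel in self.allowed

radio = TetheredRadio(allowed={21, 22, 23})
radio.check_in(now=0, reply={"revoked": [22]})
print(radio.may_transmit(10, 21), radio.may_transmit(10, 22))  # True False
print(radio.may_transmit(7200, 21))                            # False: no recent check-in
radio.check_in(now=7200, reply={"shut_off": True})
print(radio.may_transmit(7201, 21))                            # False: switched off remotely
```

In this design, every transmission decision depends on state that the remote authority can change.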

Here, we encounter a commons with a new kind of networked enclosure. The frequency band becomes open, but various draconian interventions in the network architecture constrain access. It might seem, therefore, that we are not witnessing a ‘disaccumulation’ of network infrastructure, but its reconfiguration along new metrics of speed and diffusion. This is to say that capital might become ever more tightly woven through forms of decentralisation. This is particularly the case in an information economy where forms of networked media facilitate the automated monitoring, aggregation and control of distributed agents (Galloway, 2004). ‘Command and control’ no longer permanently resides in a regulatory authority, but moves about as desired.

Contrary to much of the theory on the communism of capital, which supposes an imminent crisis, it appears still possible not only to produce temporary alliances between industrial and cognitive capitalism, but to leverage new forms of enclosure over the top of an emerging accumulation regime particular to the network economy.

This re-drawing of the boundaries of both the commons and the systems of enclosure is part of the unfolding management of the communism of capital.

[1] For examples see the OSI model or TCP/IP.

[2] Software studies, a burgeoning discipline that explores the sociopolitics of logical processes such as protocols, algorithms and automated management systems, has made a significant contribution to an understanding of how informational processes play a role in the valorisation process (Galloway, 2004; Lessig, 2006; Galloway and Thacker, 2007; Fuller, 2008). This is in turn complemented by broader discussions from medium theory, materialism and the political economy of communications (Kittler, 1995; Smythe, 2001; Fuchs, 2009, 2010, 2011). Finally a body of research, largely emerging from law, provides perspectives on the implementation of property rights through intellectual property (IP), digital rights management (DRM), communications policy and technological standards and legislation (Benkler, 1998, 2001, 2004, 2006; Werbach, 2004, 2011; Sandvig, 2006; de Vries, 2008).

[3] A form of capitalism centred around the accumulation of immaterial assets.

[4] Source: http://stakeholders.ofcom.org.uk/market-data-research/market-data/communications-market-reports/cm05/overview05/spectrum/.

[5] This range is invisible to the human eye, but these waves are the same kind of electromagnetic radiation as the visible spectrum of colours, the portion of the spectrum that the human eye can perceive.

[6] ‘Smart dust’ is an emerging system of many tiny microelectromechanical sensing systems. These can be wirelessly networked and distributed over an area to extract intelligence.

[7] Take, for example, the hugely popular Nike+ app. Nike+ applications for iPhone and Android allow runners to monitor workouts. This includes mapping and tracking runs, monitoring personal fitness, and logging and sharing workout results with a social network of other Nike+ users. The mobile application utilises location-based information such as GPS and local weather, social networking capabilities, demographic information, and habits of consumption such as running shoes purchased and preferred music playlists. Nike attribute a significant increase in their market share in sports clothing to the global success of this social media application (Swallow, 2011). Not only is the application designed to build a strong consumer base for Nike products, but identified revenue streams also include the possibility of tailoring products to the consumer through location-based and highly personalised offers on the go. ‘Ultimately, we are about connecting with the consumer where they are’, says Nike’s Global Digital Brand and Innovation Director Jesse Stollak. ‘We started with [the] notion that this was about publishing to them with the right message and at the right time. We’ve quickly evolved to a focus on conversations and engaging them to participate as opposed to using new media in traditional ways’ (ibid.).

[8] Since July 1994, the FCC have conducted 87 spectrum auctions, which had raised over $60 billion for the US at the time of writing. The UK’s spectrum auction for 4G services is projected to raise between £3 billion and £4 billion in 2013 (Thomas, 2012).

[9] Take, for example, a number of well-known community wireless initiatives such as OpenBTS and Village Telco. OpenBTS is a software-based GSM access point that allows standard GSM-compatible phones to place calls outside of an existing telecommunications network (Burgess, 2011). Using software and inexpensive Universal Software Radio Peripheral devices (USRPs) to replace the costly core infrastructure of the average mobile network, the developers have implemented a communications interface with a number of socially and politically beneficial applications. These include not only the provision of universal service in rural and indigenous areas where the cost of infrastructure is prohibitive, but also the provision of a decentralised communications infrastructure, deployable in disaster relief, or in political situations where the existing network is under sovereign jurisdiction (Grammatis, 2011). However, because of current proprietary spectrum licensing, the operation of an OpenBTS system anywhere in the GSM band is strictly prohibited (Song, 2011). Other attempts at common core infrastructure such as Village Telco utilise the unlicensed 2.4 GHz band to create wireless mesh networks that support low-cost internet access and telephony. Though these may not be subject to the same ownership constraints operating in licensed spectrum, they are constrained in other significant ways by the geographic and power-transmit regulations surrounding unlicensed spectrum.

[10] As a counter-example, ‘open spectrum’ advocates such as Robert Horvitz and David Reed have likened electromagnetic frequencies to colour (Weinberger, 2003). Where the visible spectrum of colour comprises the narrow band of wavelengths that the human eye can perceive, radio frequencies comprise wavelengths far too long to be seen. In such a conceptual exercise, a title to a portion of the spectrum is similar to government privatisation of the colour red.

[11] Arguably the metaphor of electromagnetic frequencies as land and interference as a form of overpopulation are also artefacts of the techniques available at the time of the first radio acts. Early transmission techniques were unsophisticated and required exclusive usage of a frequency band by a transmitter for fidelity. However, despite the development of dynamic spectrum access techniques as early as the 1940s, this metaphor continues to operate in regulatory decisions to the present day. These metaphors cannot be justified by appeal to the technological geography alone, therefore.

[12] The term used to denote signal processing techniques that dynamically and intelligently utilise available spectrum at a number of different frequencies.

[13] Just as we can speak of spectrum as ‘managed into scarcity’, advocates of dynamic spectrum access discuss alternative techniques through which this resource might be ‘managed into abundance’ (Doyle, 2012).

[14] According to Martin Cooper, the number of communications that can theoretically be conducted over a given area of usable radio spectrum has doubled every 30 months since Marconi’s first radio transmissions in 1895. This observation has been dubbed ‘Cooper’s Law’.

[15] Spread spectrum describes techniques in which a signal generated in a particular bandwidth is deliberately spread across the frequency domain, resulting in a wider bandwidth.

[16] A directional antenna radiates greater power in one or more directions, allowing for greater performance and reduced interference from unwanted signals.

[17] A cognitive radio is an intelligent device that can dynamically adapt a range of operating parameters such as frequency of operation, power, modulation scheme, antenna beam pattern, battery usage and so on. These adaptations may occur either in specific predefined ways or through pattern recognition and computer-based learning from real world situations.

[19] Benkler has described the relationship between granularity and shareable resources (2004). Granularity is a concept that is used to describe the scale and depth at which a resource is normally provisioned. Large grained goods are those typically provisioned in increments that constrain individual access. Small grained goods – such as a personal computer in the first world – are provisioned on a scale that enables individual access. For Benkler, the granularity of spectrum contributes to scarcity because the smallest increment size not only constrains bottom-up access, it also almost guarantees excess capacity in most contexts.

[20] Certain national regulatory bodies have recently begun to use geolocation databases to enable access to ‘TV white spaces’. These are blocks of spectrum that have become available through the global switch from analogue to digital television. Because analogue television required more spectrum for transmission and produced greater interference, the more spectrum-efficient digital broadcasting frees up spectrum in the highly desirable 700MHz range. Recent regulations include a number of protocols for access to this spectrum, such as listen-before-talk combined with geolocation and database lookup, in which a transmitting device queries a database to determine whether a frequency is currently in use at its geographic location. The current databases, however, use a wave propagation model, as opposed to a dynamic measurement approach, to determine whether spectrum is ‘available’. This model predicts whether certain frequencies are available at a specific location by estimating the strength of incumbent signals as a function of distance from their transmitters. According to Marcus (2010), the model currently employed does not take adequate account of signal attenuation, and so often reports a frequency as occupied when in reality it is not. The data model, he argues, is engineered to be highly conservative, prohibiting access by unlicensed users in favour of the licensed incumbent.
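The database lookup described in this note can be sketched as follows. A device reports its position and the channel it wants; the database predicts each incumbent’s signal strength at that point with a propagation model and refuses access if any prediction exceeds a protection threshold. The free-space path loss formula (distance in km, frequency in MHz) is standard, but the `Incumbent` record, the threshold and the figures are hypothetical, and operational white-space databases use more elaborate propagation models than this.

```python
import math
from dataclasses import dataclass


@dataclass
class Incumbent:
    """A licensed transmitter recorded in a (hypothetical) geolocation database."""
    channel: int
    x_km: float
    y_km: float
    eirp_dbm: float
    freq_mhz: float


def free_space_path_loss_db(distance_km: float, freq_mhz: float) -> float:
    """Free-space path loss in dB; ignores terrain, buildings and other attenuation."""
    return 20 * math.log10(distance_km) + 20 * math.log10(freq_mhz) + 32.44


def channel_available(database, channel, x_km, y_km, threshold_dbm=-100.0):
    """A channel is 'available' at (x_km, y_km) only if every incumbent on it
    is predicted (not measured) to arrive below threshold_dbm."""
    for tx in database:
        if tx.channel != channel:
            continue
        distance = max(math.hypot(tx.x_km - x_km, tx.y_km - y_km), 0.001)  # avoid log10(0)
        predicted_dbm = tx.eirp_dbm - free_space_path_loss_db(distance, tx.freq_mhz)
        if predicted_dbm >= threshold_dbm:
            return False
    return True


if __name__ == "__main__":
    db = [Incumbent(channel=40, x_km=0.0, y_km=0.0, eirp_dbm=30.0, freq_mhz=600.0)]
    print(channel_available(db, 40, 10.0, 0.0))   # predicted about -78 dBm: refused
    print(channel_available(db, 40, 500.0, 0.0))  # predicted about -112 dBm: allowed
```

Free-space loss typically underestimates real-world attenuation, so a model of this kind tends to overestimate incumbent signal strength and report channels as occupied, erring in the conservative direction Marcus (2010) describes.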

[21] Source: http://www.sharedspectrum.com. These images show measurements taken by a company called Shared Spectrum on behalf of the Centre for Telecommunications Research (CTVR), Trinity College Dublin, in 2007. They record spectrum occupancy over 40 hours on 16-18 April 2007. Such measurements are site-specific, and similar plots, also produced by Shared Spectrum, exist for the USA and the UK. This plot shows spectrum use in Dublin city centre, but it is indicative of the kind of picture Shared Spectrum found in many locations across the USA and elsewhere.

[22] Fluidity of licenses: Both reports recommend a shift from exclusive assignment and allocation of licenses in favour of various modalities of shared assignment in which channels are occupied by multiple users. This ranges from various forms of unlicensed access to licensed but underutilised spectrum in federal and non-federal bands, through to the removal of licensed bands altogether in favour of commons spectrum[22]. Licenses themselves also become more fluid, operating across different time-frames, permissions and territories and facilitating access to spectrum on both an episodic and a spatially modest scale. Finally, regulations would be lighter – built around the assumption that anything not explicitly forbidden is permitted, as opposed to the legacy principle that everything is forbidden beyond what is expressly permitted by the regulatory body.

[23] An increase in unlicensed spectrum: This refers to forms of sharing without channel assignments and with neutral access for all users. The reports propose a significant increase in license-exempt spectrum through the allocation of TV White Space and through the clearing of underutilised federal and non-federal bands. As an initial test bed, PCAST calls for 1,000 MHz of federal spectrum to be opened to sharing. Similarly, the EC report calls for the creation of two new swathes of license-exempt spectrum, each in the order of 40-50MHz, in the UHF regions above and below 1GHz.

[24] The use of cognitive radio and dynamic spectrum access techniques: The implementation of various forms of sharing and spectrum commons relies on intelligent devices, rather than a central authority, for management. The reports recommend using available and emerging dynamic spectrum access techniques to manage cooperation between devices. These include spread spectrum, in which a signal is spread in the frequency domain; ultra-wideband, in which signals are underlaid across a band of frequencies at very low power, close to the noise floor; opportunistic, cognitive-sensing-based channel access, in which software-defined radios sense activity in a band and respond accordingly; and a variety of networked and context-aware radios with access to geolocation databases that provide information about available spectrum at a given location.
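Opportunistic, sensing-based channel access can be illustrated with energy detection, the simplest sensing technique: a radio measures the average power in each candidate channel and transmits only on one that appears idle. In the sketch below, the sample scaling (each squared sample read as milliwatts), the -90 dBm threshold and the channel numbers are hypothetical; practical cognitive radios use more robust detectors and often combine sensing with database lookup.

```python
import math


def sensed_power_dbm(samples):
    """Average power of the received samples in dBm, assuming each sample
    is scaled so that sample**2 is an instantaneous power in milliwatts."""
    if not samples:
        raise ValueError("need at least one sample")
    power_mw = sum(s * s for s in samples) / len(samples)
    return 10 * math.log10(power_mw) if power_mw > 0 else float("-inf")


def pick_idle_channel(sensed, threshold_dbm=-90.0):
    """Listen before talk: return the lowest-numbered channel whose sensed
    energy falls below threshold_dbm, or None if every channel appears busy."""
    for channel in sorted(sensed):
        if sensed_power_dbm(sensed[channel]) < threshold_dbm:
            return channel
    return None


if __name__ == "__main__":
    busy = [1e-3] * 8  # about -60 dBm: an incumbent is transmitting
    idle = [1e-6] * 8  # about -120 dBm: little more than noise
    print(pick_idle_channel({21: busy, 22: idle}))
```

Here channel 21 is skipped because an incumbent is audible and the radio settles on channel 22; if every channel were busy it would return `None` and stay silent rather than interfere.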

[25] Reader comments in response to Jon Brodkin’s article ‘Bold plan: opening 1,000 MHz of federal spectrum to Wi-Fi-style sharing’ (2012) [http://arstechnica.com/information-technology/2012/07/bold-plan-opening-1000-mhz-of-federal-spectrum-to-wifi-style-sharing/?comments=1#comme....

[26] Notably, the metaphor of ‘squatting’ is sometimes used in situations where licensed spectrum is made available to unlicensed users through dynamic spectrum access, directly referencing the economically disruptive aspects of this technique (Doyle, 2009; 2011).

[27] See Living Labs http://www.openlivinglabs.eu/ or the Open Handset Alliance http://www.openhandsetalliance.com/.

Ballon, P. and S. Delaere (2009) ‘Flexible spectrum and future business models for the mobile industry’, Telematics and Informatics, (26): 249-258.

Benkler, Y. (1998) ‘Overcoming agoraphobia: Building the commons of the digitally networked environment’, Harvard Journal of Law and Technology, (287): 1-113.

Benkler, Y. (2001) ‘Property, commons and the first amendment: Towards a core commons infrastructure’, white paper for the First Amendment Program, Brennan Center for Justice at NYU School of Law, New York, United States of America, March.

Benkler, Y. (2004) ‘Sharing nicely: On shareable goods and the emergence of sharing as a modality of economic production’, The Yale Law Journal, 114(273): 275-359.

Benkler, Y. (2006) The wealth of networks: How social production transforms markets and freedom. London: Yale University Press.

Brodkin, J. (2012) ‘Bold plan: Opening 1,000 MHz of federal spectrum to Wi-Fi-style sharing’ article posted 20-07-2012 at 9:33pm to arstechnica. [http://arstechnica.com/information-technology/2012/07/bold-plan-opening-1000-mhz-of-federal-spectrum-to-wifi-style-sharing/].

Burgess, D. (2011) ‘David Burgess on OpenBTS – A DIY Air-Interface!’ Skype interview with L. Dryburgh at Emerging Communications Conference, San Francisco, United States of America, June 27-29.

Caffentzis, G. (2010) ‘The future of “the commons”: Neoliberalism’s “plan B” or the original disaccumulation of capital?’, New Formations, 69: 23-41.

Childs, W.W. (1924) ‘Problems in the radio industry’, The American Economic Review, 14(3): 520-523.

Coase, R. (1959) ‘The Federal Communications Commission’, Journal of Law and Economics, 2(1): 1-40.

Cochrane, P. (2006) ‘The future of regulation – not’, in E. Richards, R. Foster and T. Kiedrowski (eds.) Communications: The next decade. London: Ofcom.

Commerce Spectrum Management Advisory Committee (CSMAC) (2012) ‘CSMAC unlicensed subcommittee final report’, 24 July 2012. [http://www.ntia.doc.gov/files/ntia/publications/unlicensed_subcommittee_finalreport072420122.pdf].

Communication from the Commission to the European Parliament, the Council, the European Economic and Social Committee and the Committee of Regions (2012), ‘Promoting the shared use of radio spectrum resources in the internal market’, European Commission Report, 3 September 2012. [http://ec.europa.eu/information_society/policy/ecomm/radio_spectrum/_document_storage/com/com-ssa.pdf].

De Vries, P.J. (2008) ‘De-situating spectrum: Rethinking radio policy using non-spatial metaphors’, New Frontiers in Dynamic Spectrum Access, IEEE: 1-5.

Doyle, L.E. (2009) Essentials of cognitive radio. London: Cambridge University Press.

Doyle, L.E. (2011) ‘The mobile phones of the future’. [http://ledoyle.files.wordpress.com/2011/04/future_of_the_phone_april_20111.pdf].

Doyle, L.E. (2012) ‘PCAST: Re-casting the future’ blog post on 27-07-2012 to Linda Doyle: Research, ideas and random thoughts. [http://ledoyle.wordpress.com/2012/07/27/pcast-re-casting-the-future/].

Dyer-Witheford, N. (1999) Cyber-Marx: Cycles and struggles of high-technology capitalism. Chicago: University of Illinois Press.

Federal Communications Commission (FCC) (2012a) ‘Proposed certification test procedures for TV band (white space) devices authorized under subpart H of the part 15 rules’. [http://apps.fcc.gov/eas/comments/GetPublishedDocument.html?id=223&tn=19463].

Federal Communications Commission (FCC) (2012b) Allocation chart 2012. [http://transition.fcc.gov/oet/spectrum/table/fcctable.pdf].

Forde, T., I. Macaluso and L. Doyle (2011) ‘Exclusive sharing and virtualisation of the cellular network’, New Frontiers in Dynamic Spectrum Access, IEEE: 337-348.

Forge, S., R. Horowitz and C. Blackman (2012) ‘Perspectives on the value of shared spectrum access’, Final report for the EU Commission. [http://ec.europa.eu/information_society/policy/ecomm/radio_spectrum/_document_storage/studies/shared_use_2012/scf_study_shared_spectrum_access_20120210.pdf].

Fuchs, C. (2009) ‘Information and communication technologies and society: A contribution to the critique of the political economy of the internet’, European Journal of Communication, 24(1): 69-87.

Fuchs, C. (2010) ‘Labor in informational capitalism and on the internet’, The Information Society, 26: 179-196.

Fuchs, C. (2011) ‘Web 2.0, prosumption and surveillance’, Surveillance & Society, 8(3): 288-309.

Fuller, M. (ed.) (2008) Software studies: A lexicon. London: MIT Press.

Galloway, A. (2004) Protocol: How control exists after decentralization. London: MIT Press.

Galloway, A. and E. Thacker (2007) The exploit: A theory of networks. Minneapolis: The University of Minnesota Press.

Grammatis, K. (2011) ‘No Internet in Egypt? We can fix that’, Huffington Post 9. [http://www.huffingtonpost.com/kosta-grammatis/no-internet-in-egypt-we-c_b_815765.html].

Hardin, G. (1968) ‘The tragedy of the commons’, Science, 162: 1243-1248.

Hardt, M. (2010) ‘The common in communism’, Rethinking Marxism, 22(3): 346-356.

Hardt, M. and A. Negri (2009) Commonwealth. Cambridge, Massachusetts: Belknap.

Harvey, D. (2001) ‘The art of rent: Globalization, monopoly and the commodification of culture’, Socialist Register, 38: 93-110.

Hazlett, T. and E. Leo (2010) ‘The case for liberal spectrum licences: a technical and economic perspective’, George Mason Law & Economics Research Paper, 10(19).

Herzel, L. (1951) ‘Public interest and the market in color television regulation’, University of Chicago Law Review.

Higginbotham, S. (2010) ‘Spectrum shortage will strike in 2013’, gigaom. [http://gigaom.com/2010/02/17/analyst-spectrum-shortage-will-strike-in-2013/].

Kelly, K. (2009) ‘The new socialism: Global collectivist society is coming online’, Wired. [http://www.wired.com/culture/culturereviews/magazine/17-06/nep_newsocialism?currentPage=all].

Kittler, F. (1995) ‘There is no software’, ctheory. [http://www.ctheory.net/articles.aspx?id=74].

Kluitenberg, E., J. Seijdel and L. Melis (eds.) (2007) Open 11: Hybrid space. Amsterdam: NAI Publishers.

Lakoff, G. and M. Johnson (2003) Metaphors we live by. Chicago: University of Chicago Press.

Lessig, L. (2004) Free culture: How big media uses technology and the law to lock down culture and control. London: Penguin Press.

Lessig, L. (2006) Code: Version 2.0. London: Basic Books.

Lukács, G. (1971) ‘Reification and the consciousness of the proletariat’, in History and class consciousness. London: Merlin Press.

Manzerolle, V. (2010) ‘Mobilizing the audience commodity: Digital labour in a wireless world’, ephemera: theory and politics in organization, 10(3/4): 455-469.

Marazzi, C. (2007) ‘Measure and finance’, presented at ‘Measure for measure: A workshop on value from below’, Goodenough College, London, United Kingdom, 21 September.

Marcus, B.K. (2004) ‘The spectrum should be private property: The economics, history, and future of wireless technology’, in Essays in political economy. Alabama: Ludwig Von Mises Institute.

Marcus, M.J. (2010) ‘Cognitive radio under conservative regulatory environments: Lessons learned and near term options’, in New Frontiers in Dynamic Spectrum Access, IEEE: 1-5.

Moulier-Boutang, Y. (2012) Cognitive capitalism. London: Basic Books.

Napoleoni, C. (1956) Dizionario di economia politica. Milan: Edizioni di Comunità.

O’Dwyer, R. and L. Doyle (2012) ‘This is not a bit-pipe: A political economy of the substrate network’, Fibreculture, (20): 10-35.

OFCOM (2010) ‘United Kingdom frequency allocation table 2010’ [http://stakeholders.ofcom.org.uk/binaries/spectrum/spectrum-policy-area/spectrum-management/ukfat2010.pdf].

Pasquinelli, M. (2008) Animal spirits: A bestiary of the commons. Rotterdam: NAI Publishers.

PCAST (2012) ‘Report to the President: Realizing the full potential of government-held spectrum to spur economic growth’. [http://www.whitehouse.gov/sites/default/files/microsites/ostp/pcast_spectrum_report_final_july_20_2012.pdf].

Rheingold, H. (2002) Smart mobs: The next social revolution. Cambridge MA: Basic Books.

Sandvig, C. (2006) ‘Access to the electromagnetic spectrum is a foundation for development’, in M. Harvey (ed.) Media matters: Perspectives on advancing governance and development. Paris: Internews.

Smythe, D. (2001) ‘The audience commodity and its work’, in M.G. Durham and D.M. Kellner (eds.) Media and cultural studies keyworks. Oxford: Blackwell Publishing.

Song, S. (2011) ‘Village Telco and OpenBTS networks: Technology overview and challenges’ article posted 14-12-2011 at 14:00 to ictworks. [http://www.ictworks.org/tags/openbts].